Grizzlyware — Digital transformation and critical infrastructure

Grizzlyware is a company specialising in digital transformation services, custom software and tailored product features. It builds unique bridges between technology platforms to modernise digital systems, allowing its customers to streamline their workflows and improve the user experience.

As part of a Microsoft Storage Spaces Direct (S2D) converged network project, Grizzlyware operates a central data network comprising 120 virtual machines (mainly domain controllers, file servers and terminal servers) used by 600 employees. Live migrations take place between the Hyper-V hosts. The infrastructure requires robust fibre optic cables suited to the demanding environment of a datacenter.

Four critical constraints to solve

Storage Spaces Direct (S2D) traffic reaches 50 TB in total across the four Hyper-V hosts. Each host requires 4 links to the switches, so the switches must deliver throughput, switching capacity and latency suited to this load.

Traffic originates from the 4 virtualisation hosts and mixes S2D flows, management traffic, backups and inter-VM networking. High availability is essential: each host must be connected to each of the two redundant switches.

Nightly backups generate additional traffic peaks. The inter-switch connection must be planned carefully to absorb these loads without affecting the availability of production services.

The datacenter environment is particularly demanding for cables: mechanical stress, temperatures, installation density. Long-term durability is a non-negotiable criterion.

Armoured OS2 fibre architecture with MLAG for high availability

The solution is built on a switch with a switching capacity of 1.44 Tbps, a forwarding rate of 1,071 Mpps and a latency of 612 ns, which leaves enough headroom to carry the 50 TB of S2D traffic.
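To show how the capacity and packet-rate figures relate, here is a minimal sketch of the usual line-rate arithmetic. It assumes, as is common on switch datasheets but not confirmed by the source, that the 1.44 Tbps figure counts both directions (full duplex) and that the packet rate is measured with minimum-size 64-byte Ethernet frames plus 20 bytes of preamble and inter-frame gap. The function and constant names are illustrative only.

```python
# Figures quoted in the text.
SWITCHING_CAPACITY_BPS = 1.44e12   # 1.44 Tbps
QUOTED_MPPS = 1071                 # 1,071 Mpps

# Assumptions (not from the source): capacity is counted full duplex and
# the packet rate is measured with minimum-size Ethernet frames.
MIN_FRAME_BYTES = 64
PREAMBLE_AND_GAP_BYTES = 20        # 8-byte preamble/SFD + 12-byte inter-frame gap


def line_rate_mpps(capacity_bps: float) -> float:
    """Return the theoretical packet rate in Mpps for a full-duplex capacity."""
    one_way_bps = capacity_bps / 2
    bits_on_wire = (MIN_FRAME_BYTES + PREAMBLE_AND_GAP_BYTES) * 8
    return one_way_bps / bits_on_wire / 1e6


mpps = line_rate_mpps(SWITCHING_CAPACITY_BPS)
print(f"Theoretical line rate: {mpps:.1f} Mpps (quoted: {QUOTED_MPPS} Mpps)")
```

Under these assumptions the two quoted figures agree, which suggests the switch can forward at line rate even with the smallest frames.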

Sixteen 10G links using armoured LC/UPC to LC/UPC OS2 fibre optic cables (four per server) are set up between the switches and the servers, which are fitted with 10G network cards. Since each host is connected to both redundant switches, MLAG (Multi-chassis Link Aggregation) is deployed: the two independent physical switches operate as a single logical switch, ensuring service continuity in the event of a switch failure.
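The link plan can be sketched as a small model that checks the redundancy requirement and the bandwidth left after a switch failure. This is only an illustration: the host and switch names are hypothetical, and the even split of two links per host to each switch is an assumption, since the source only states four links per server and a connection to both switches.

```python
from itertools import product

HOSTS = ["host1", "host2", "host3", "host4"]   # hypothetical names
SWITCHES = ["sw-a", "sw-b"]                    # hypothetical names
LINKS_PER_HOST_PER_SWITCH = 2                  # assumption: 4 links split evenly
LINK_GBPS = 10


def build_link_plan():
    """Return one (host, switch, index) tuple per physical fibre link."""
    return [
        (host, switch, index)
        for host, switch in product(HOSTS, SWITCHES)
        for index in range(LINKS_PER_HOST_PER_SWITCH)
    ]


def host_bandwidth_gbps(plan, host, failed_switch=None):
    """Sum the bandwidth of a host's links, skipping a failed switch if given."""
    return sum(
        LINK_GBPS
        for link_host, switch, _ in plan
        if link_host == host and switch != failed_switch
    )


plan = build_link_plan()
print(f"Total links: {len(plan)}")
for host in HOSTS:
    # Redundancy check: every host must reach both switches.
    assert {s for h, s, _ in plan if h == host} == set(SWITCHES)
    normal = host_bandwidth_gbps(plan, host)
    degraded = host_bandwidth_gbps(plan, host, failed_switch="sw-a")
    print(f"{host}: {normal} Gbit/s normal, {degraded} Gbit/s with sw-a down")
```

With this split, each host keeps half of its links if either switch fails, which is the behaviour MLAG is meant to make transparent to the servers.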

A reliable and long-lasting datacenter infrastructure

The deployment of Elfcam armoured fibre optic cables allowed Grizzlyware to meet every technical constraint (throughput, redundancy and mechanical durability) while ensuring lossless transmission across the sixteen 10G links in the dense datacenter environment. The MLAG setup delivers the high availability required for the 600 connected users.

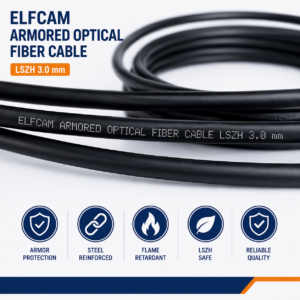

Armoured fibre optic cables used in this project

The selected cables combine a steel armour for mechanical strength in the datacenter, an LSZH jacket for fire safety compliance, and UPC-polished LC connectors to minimise reflection losses.

Steel-armoured fibre optic cable for indoor and outdoor use, LC/UPC to LC/UPC, multimode duplex OM3, black (Ref: 4511)

Available lengths: 10 m to 300 m. Price: 21.99 € (previously 23.99 €).

Elfcam® steel-armoured fibre optic cable for indoor and outdoor use, LC/UPC to LC/UPC, OS2 single-mode duplex 9/125 μm, LSZH, black (Ref: 5221)

Available lengths: 10 m to 300 m. Price: 17.99 € (previously 19.99 €).